I was at the B2B Forum this year, and let me tell you, Ann Handley’s Barbie-inspired presentation was a hit. But then generative AI took center stage, and the room’s vibe started to shift. Andrew Davis introduced his digital twin, Drewdini, and showed us how humanizing your AI creates better results because you’re having more human-like conversations.

Chris Penn, Trust Insights Co-Founder, shared some chilling insights, including the Israeli military’s use of AI for selecting bombing targets. That moment got me thinking about the ethical and practical implications of AI in marketing.

Afterwards, Chad White, who’s like the Einstein of emails, laid out some serious concerns that resonated with me. For instance, as GenAI takes over more tasks, companies might not reinvest the saved time back into employees’ lives. And let’s not even get started on how GenAI could turn unique voices into digital clones, stripping the artistry right out of content creation.

The conference was programmed to tie into what everyone is talking about, from Ann’s uplifting talk to the darker underbelly of AI’s impact on our jobs. One thing that stuck with me was the idea that GenAI could break down the concept of reciprocity in cold outreach. If people suspect a machine crafted that “personalized” email, why would they bother responding?

One common topic in the hallways was the lack of transparency from brands about their GenAI use. It’s like we’re opening Pandora’s box, and while the EU might regulate it, the U.S. is lagging behind big time. But hey, it’s not all doom and gloom. GenAI has many perks, and the ongoing advancements are constantly mind-blowing. Still, we’ve got to keep our eyes wide open and tread carefully.

Personally, I don’t have a name for my AI self. But it could just be a matter of time. Do you have one?

News at the Intersection of AI and Design

🤖 The Generative AI Beginner’s Kit

Speaking of Chris Penn, dive into his guide to getting started with generative AI, where he breaks down the essentials for beginners and even throws in some pro tips.

- Foundation Over Vendors: Chris emphasizes sticking close to foundational AI models rather than getting tied to specific vendors, since the foundational technologies evolve more quickly than the products built on top of them.

- Tool Responsibility: While third-party tools like ChatGPT are convenient, Penn warns that it’s your responsibility to know when not to use them, especially when dealing with sensitive data.

👩‍💻 Trying to figure out the next move in your design career?

Nobody really knows what the future of design will be. But it doesn’t look like it’s going to be better than today.

- Design as Springboard: You have skills that can transfer to other career paths. Do you know what those are?

- An Outside Point of View: I can walk you through your strengths and what you enjoy to help guide your next career move.

👮🏽‍♀️ Generative AI Needs Tools to Avoid Copyright Infringement

Naveen Rao, VP of generative AI at Databricks, warns that companies dabbling in generative AI could face copyright infringement issues similar to what led to Napster’s downfall.

- Legal Challenges: Rao highlights that generative AI models, which ingest vast amounts of data, could inadvertently use copyrighted material, making companies vulnerable to lawsuits.

- Data Safety: Rao’s solution is an open-source platform that allows companies to train their own large language models safely, thereby avoiding legal pitfalls and enabling them to monetize their AI services.

New Resources for you

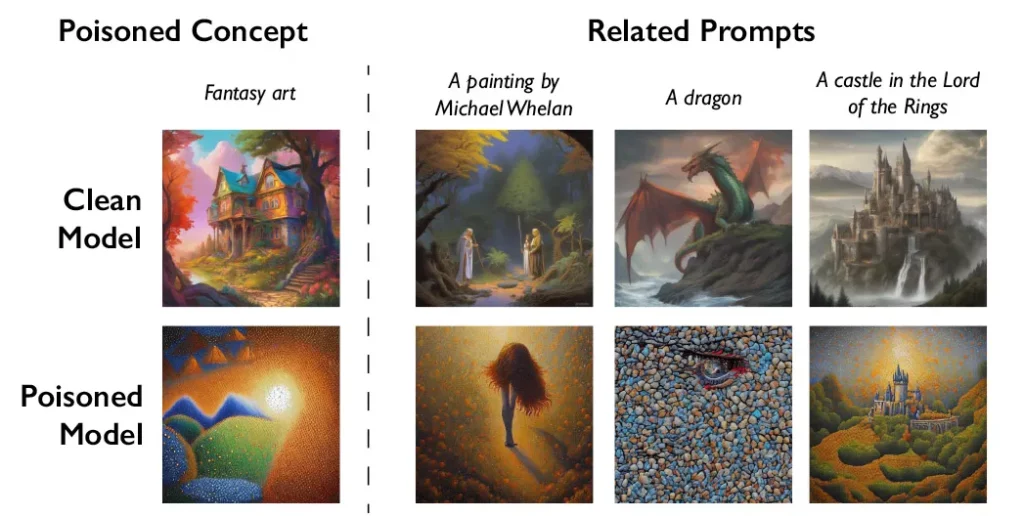

Did you see that there is now a way for artists to fight back against their work being scraped and repurposed in AI models? This piece in MIT Technology Review could be a significant plot twist in the AI-artists saga. Artists are now using a tool called Nightshade to add invisible changes to their artwork.

The catch? If AI companies scrape this “poisoned” data to train their models, things go haywire.

Imagine a DALL-E model spitting out images where dogs turn into cats and cars morph into cows. It’s like artists are throwing a wrench into the AI machine, and I’m here for it. I once walked into a poster store and saw one of my designs on sale for $120. They had found the image online and printed it. You could even see my signature still on it.

Ben Zhao, the genius behind Nightshade, aims to tip the power balance back towards artists and against AI companies that have been, let’s say, “borrowing” artists’ work without permission.

But wait, there’s more. Nightshade is going to be integrated into another tool called Glaze, which already allows artists to mask their unique styles from AI’s prying eyes. Once these poisoned samples make their way into an AI model’s dataset, it’s chaos. The corrupted data is tough to remove, making it a nightmare for tech companies. It’s like artists have found a way to fight back, and it’s about time. Zhao admits there’s a risk of misuse, but you’d need thousands of poisoned samples to really mess with larger AI models.

So, what does this mean for the future of AI and art? On one hand, it’s a powerful deterrent that could make AI companies think twice before scraping artists’ work. On the other hand, it opens up ethical questions about data poisoning and its potential misuse. Either way, it’s a fascinating development that adds another layer to the ongoing tension between AI and creative expression. It’s like a cat-and-mouse game, and I can’t wait to see who outsmarts whom next.


Thanks for reading!

-Jim
